InfoQ interview up

There’s an interview with me at InfoQ. It covers micro-scale retro-futurist anarcho-syndicalism and my hypothesis that we could chisel value away from automating business-facing examples and add it to cheaper activities.

The value of programmer TDD is well established. It’s natural to extrapolate that practice to business-facing tests, hoping to obtain similar value. We’ve been banging away at that for years, and the results disappoint me. Perhaps it would be better to invest heavily in unprecedented amounts of built-in support for manual exploratory testing.

In 1998, I wrote a paper, “When should a test be automated?”, that sketched some economics behind automation. Crucially, I took the value of a test to be the bugs it found, rather than (as was common at the time) how many times it could be run in the time needed to step through it manually.

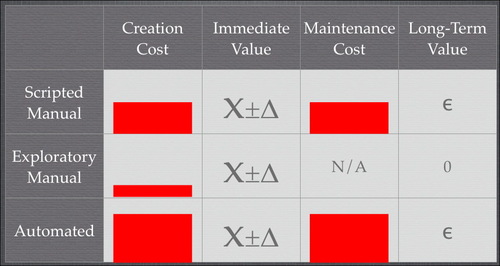

My conclusions looked roughly like the following:

Scripted tests, be they automated or manual, are expensive to create (first column). Manual scripts are cheaper, but they still require someone to write steps down carefully, and they likely require polishing before they can truly be followed by someone else. (Note: height of bars not based on actual data.)

In the second column, I assume that a particular set of steps has roughly the same chance of finding a bug whether executed manually or by a computer, and whether the steps were planned or chosen on the fly. (I say “roughly” because computers don’t get bored and miss bugs, but they also don’t notice bugs they weren’t instructed to find.)

Therefore, if the immediate value of a test is all that matters, exploratory manual testing is the right choice. What about long-term value?

Assume that exploratory tests are never intentionally repeated. Both their long-term cost and value are zero. Both kinds of scripted tests have quite substantial maintenance costs (especially in that era, when testing was typically done through an unmodified GUI). So, to pull ahead of exploratory tests in the long term, scripted tests must have substantial bug-finding power. Many people at that time observed that, in fact, most tests either found a bug the first time they were run or never found a bug at all. You were more likely to fix a test because of an intentional GUI change than to fix the code because the test found a bug.

So the answer to “when should a test be automated?” was “not very often”.

Programmer TDD changes the balance in two ways:

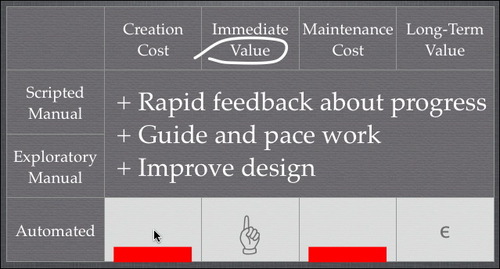

New sources of value are added. Extremely rapid feedback reduces the cost of debugging. (Most bugs strike while what you did to create them is fresh in your mind.) Many people find the steady pace of TDD allows them to go faster, and that the incremental growth of the code-under-test makes for easier design. And, most importantly as it turns out, the need to make tests run fast and reduce maintenance cost leads to designs with good properties like low coupling and high cohesion. (That is, properties that previously were considered good in the long term—but were routinely violated for short-term gain—now had powerful short-term benefits.)

Good design and better programmer tools dramatically lowered the long-term cost of tests.

So, much to my surprise, the balance tipped in favor of automation—for programmer tests. It’s not surprising that many people, including me, hoped the balance could also tip for business-facing tests. Here are some of the hoped-for benefits:

Tests might clarify communication and avoid some cases where the business asks for something, the team thinks they’ve delivered it, and the business says “that’s not what I wanted.”

They might sharpen design thinking. The discipline of putting generalizations into concrete examples often does.

Programmers have learned that TDD supports iterative design of interfaces and behavior. Since whole products are also made of interfaces and behavior, they might also benefit from designers who react to partially-finished products rather than having to get it right up front.

Because businesses have learned to mistrust teams who show no visible progress for eight months (at which point, they ask for a slip), they might like to see evidence of continuous progress in the form of passing tests.

People often need documentation. Documentation is often improved by examples. Executable tests are examples. Tests as executable documentation might get two benefits for less than their separate costs.

And, oh yeah, tests could find regression bugs.

So a number of people launched off to explore this approach, most notably with Fit. But Fit hasn’t lived up to our hopes, I think. The things that particularly bother me about it are:

It works well for business logic that’s naturally tabular. But tables have proven awkward for other kinds of tests.

In part, the awkwardness is because there are no decent HTML table editors. That inhibits experimentation: if you don’t get a table format right the first time, you’re tempted to just leave it.

Note: I haven’t tried ZiBreve. By now, I should have. I do include Word, Excel, and their OpenOffice equivalents among the ranks of the not-decent, at least if you want executable documentation. (I’ve never tried treating .doc files as the real tests that are “compiled” into HTML before they’re executed.)

Fit is not integrated into programmer editors the way xUnit is. For example, you can’t jump from a column name to the Java method that defines it. Partly for this reason, programmers tend to get impatient with people who invent new table formats—can’t they just get along with the old one?

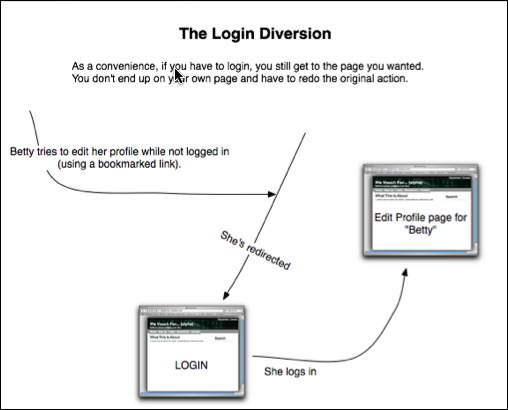

With my graphical tests, I took aim at those sources of friction. If I have a workflow test, I can express it as boxes and arrows:

I translate the graphical documents into ordinary xUnit tests so that I can use my familiar tools while coding. The graphical editor is pretty decent, so I can readily change tests when I get better ideas. (There are occasional quirks where test content has changed more than it looks like it has. That aspect of using Fit hasn’t gone away entirely.)

I’ve been using these tests, most recently on wevouchfor.org—and they don’t wow me.  While I almost always use programmer TDD when coding (and often regret skipping it when I don’t), TDD with these kinds of tests is a chore. It doesn’t feel like enough of the potential value gets realized for the tests to be worth the cost.

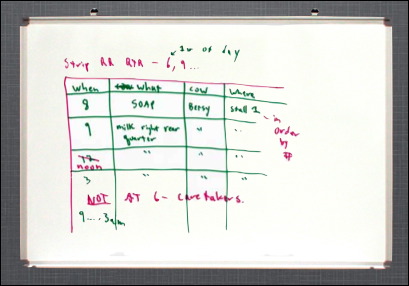

↓ Writing the executable test doesn’t help clarify or communicate design. Let me be careful here. I’m a big fan of sketching things out on whiteboards or paper:

That does clarify thinking and improve communication. But the subsequent typing of the examples into the computer is work that rarely leads to any more design benefits.

↔ Passing tests do continuously show progress to the business, but… Suppose you demonstrate each completed story anyway, at an end-of-iteration demo or (my preference) as soon as it’s finished. Given that, does seeing more tests pass every day really help?

↑ Tests do serve as documentation (at least when someone takes the time to surround them with explanatory text, and if the form and content of the test aren’t distorted to cram a new idea into existing test formats).

↑ The word I’m hearing is that these tests are finding bugs more often than I expected. I want to dig into that more: if they’re the sort of “I changed this thing over here and broke that supposedly unrelated thing over there” bugs that whole-product regression tests are traditionally supposed to find, that alone may justify the expense of test automation—unless I can find a way to blame it on inadequate unit tests or a need to rejigger the app.

↓ (This is the one that made me say “Eureka!”) Tests alone fail at iterative product design in an interesting way. Whenever I’ve made significant progress implementing the next chunk of workflow or other GUI-visible change, I just naturally check what I’ve done through the GUI. Why? This checking makes new bugs (ones the automated tests don’t check for) leap out at me. It also sometimes makes me slap my forehead and say, “What I intended here was stupid!”

But if I’m going to be looking at the page for both bugs and to change my intentions, I’m really edging into exploratory testing. Hmm… What if an app did whatever it could to aid exploratory testing? I don’t mean traditional testability features like, say, a scripting interface; I mean a concerted effort to let exploratory testers peek and poke at anything they want within the app. (That may not be different than my old motto “No bug should be hard to find the second time,” but it feels different.)

So, although features of Rails like not having to restart the server after most code changes are nice, I want more. Here’s an example.

The following page contains a bug:

Although you can’t see it, the bottom two links are wrong. They are links to /certifications/4 instead of /promised_certifications/4.

Unit tests couldn’t catch that bug. (The two methods that create those types of links are tested and correct; I just used the wrong one.)

One test of the action that created the page could have caught the bug, but did not. (To avoid maintenance problems, that test checked the minimum needed to convince me that the correct “certifications” had been displayed. I assumed that if they were displayed at all, the unit tests meant they were displayed correctly. That was actually almost right—every character outside the link’s href value was correct.)

I missed the bug when I checked the page. (I suspect that I did click one of the links, but didn’t notice it went to the wrong place. If so, I bet I missed the wrongness because I didn’t have enough variety in the test data I set up—ironic, because I’ve been harping on the importance of “irrelevant” variety since 1994.)

A user had no trouble finding the bug when he tried to edit one of his promised certifications and found himself with a form for someone else’s already-accepted certification. (Had he submitted the form, it would have been rejected, but still.)

That’s my bug: a small error in a big pile of HTML the app fired and forgot.

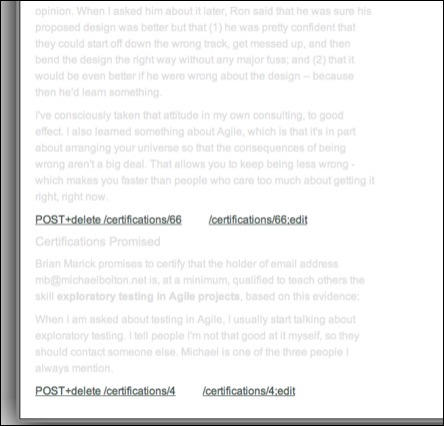

Suppose, though, that the app created and retained an object representing the page. Suppose further that an exploration support app let you switch to another view of that object/page, one that highlights link structure and downplays text:

To the eyes of someone who just added promised certifications to that page, the wrong link targets ought to jump out.
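As a rough illustration of such a link-focused view, here is a minimal sketch. The `link_view` method, the sample page, and the `/certifications/2` path are all hypothetical; a regular expression stands in for the retained page object, and a real tool would presumably work from a parsed page instead.

```ruby
# Hypothetical "link view": keep each link's text and target, drop the rest
# of the page. A regex is enough for a sketch; a real exploration tool
# would use an HTML parser or the retained page object.
def link_view(html)
  html.scan(/<a\s[^>]*href="([^"]*)"[^>]*>(.*?)<\/a>/m).map do |href, text|
    "#{text.strip} -> #{href}"
  end
end

# Made-up page fragment. The second link reproduces the bug: a promised
# certification that links to /certifications/4.
page = <<~HTML
  <p>Accepted: <a href="/certifications/2">edit</a></p>
  <p>Promised: <a href="/certifications/4">edit</a></p>
HTML

puts link_view(page)
```

With the prose stripped away, a "Promised" row pointing at `/certifications/...` is the only thing left to look at.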

There’s more that I’d like, though. The program knows more about those links than it included in the HTTP Response body. Specifically, it knows they link to a certain kind of object: a PromisedCertification. I should be able to get a view of that object (without committing to following the link). I should be able to get it in both HTML form and in some raw format. (And if the link-to-be-displayed were an object in its own right, I would have had a place to put my method, and I wouldn’t have used the wrong one. Testability changes often feed into error prevention.)
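One way to picture a link that is an object in its own right is sketched below. Everything here is an assumption made for illustration: the `ObjectLink` class, the `Struct` stand-ins for the two models, and the routing table. Only the two URL prefixes come from the bug described above.

```ruby
# Hypothetical stand-ins for the real models.
PromisedCertification = Struct.new(:id)
Certification = Struct.new(:id)

# A link that remembers which object it points to, so the path is derived
# from the target's class instead of being chosen by hand.
class ObjectLink
  # Assumed routing table for this sketch.
  PATHS = {
    PromisedCertification => "/promised_certifications",
    Certification => "/certifications"
  }.freeze

  # An exploration tool could inspect the target without following the link.
  attr_reader :target

  def initialize(target, text)
    @target = target
    @text = text
  end

  def href
    "#{PATHS.fetch(@target.class)}/#{@target.id}"
  end

  def to_html
    %(<a href="#{href}">#{@text}</a>)
  end
end

link = ObjectLink.new(PromisedCertification.new(4), "edit")
puts link.to_html
```

Because the path is looked up from the target, there is no second link-building method to pick by mistake, and `link.target` gives a place to hang a raw view of the object.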

And so on… It’s easy enough for me to come up with a list of ways I’d like the app to speak of its internal workings. So what I’m thinking of doing is grabbing some web framework, doing what’s required to make it explorable, using it to build an app, and also building an exploration assistant in RubyCocoa (allowing me to kill another bird with this stone).

To be explicit, here’s my hypothesis:

An application built with programmer TDD, whiteboard-style and example-heavy business-facing design, exploratory testing of its visible workings, and some small set of automated whole-system sanity tests will be cheaper to develop and no worse in quality than one that differs in having minimal exploratory testing, done through the GUI, plus a full set of business-facing TDD tests derived from the example-heavy design.

We shall see, I hope.

A large number of the submissions to the Agile2008 Examples stage do not use examples to clarify or explain. As a result, they are too vague to evaluate.

And people wonder why I despair.

On the agile-testing list, I answered this question:

So for the first time in many, many years I’m not in a test management position, and I’m writing tests, automating them, etc. We’re using a tool called soapUI to automate our web services testing–it’s a handy tool, supports Groovy scripting which allows me to go directly to the DB to validate the results of a given method, etc. One feature of soapUI is centralized test scripts; I can create helper scripts to do a bunch of stuff–basically, I write the Groovy code I need to validate something and then I often find I’m moving it into a helper function, refactoring, etc. My question is, how do you know the right balance between just mashing up the automation (i.e., writing a script as an embedded test script) vs. creating the helper function and calling it?

Bret Pettichord suggested I blog my answer, so here it is:

I use these ideas and recommend them as a not-bad place to start:

At the end of every half-day or full-day of on-task work (depending on my mood), I’ll spend a half an hour cleaning up something ugly I’ve encountered.

I’ll periodically ask myself “Is it getting easier to write tests?” If it’s not, I know I should slow down and spend more effort writing helper code. I know from experience what it feels like when I’ve got good test support infrastructure—how easy writing tests can be—so I know what to shoot for.

I have this ideal that there should be no words in a test that are not directly about the purpose of the test. So: unless what the test tests is the process of logging in, there should be no code like:

create_user "sam", "password"

login "sam", "password"

...

Instead, the test should say something shorter:

using_logged_in_user "sam"

...

That tends to push you toward more and smarter helper functions.
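For instance, the shorter form could be backed by a helper along these lines. This is only a sketch: the in-memory `USERS` and `SESSION` hashes and the default password are hypothetical stand-ins for whatever the real application and test framework provide.

```ruby
# Hypothetical in-memory stand-ins for the application's user store and
# session; a real helper would call into the app or its test framework.
USERS = {}
SESSION = {}

def create_user(name, password)
  USERS[name] = password
end

def login(name, password)
  raise ArgumentError, "bad credentials for #{name}" unless USERS[name] == password
  SESSION[:current_user] = name
end

# The helper hides setup that is irrelevant to the test's purpose,
# including a password the test never needs to mention.
def using_logged_in_user(name, password = "irrelevant-password")
  create_user(name, password)
  login(name, password)
  name
end

using_logged_in_user "sam"
```

The test body then mentions only "sam", and the password becomes a detail of the helper.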

Unsurprisingly, I often fall short of that ideal. But if I revisit a test and have any trouble at all figuring out what it’s actually testing, I use that as a prod to make the extra effort.

What was something of a breakthrough for me was realizing that I don’t have to get it right at this precise moment. Especially at the beginning, when you don’t have much test infrastructure, stopping every twelve seconds to write that helper function you ought to have completely throws you out of the flow of what you’re trying to accomplish. I’ve gotten used to writing the wrong code, then fixing it up later: maybe at the end of the task, maybe not until I stumble across the ugliness again.

Adam Geras and I will be “producers” for this “stage” at Agile2008. Here are some notes.

I’m imagining a single room for the duration of the conference. We won’t have a huge number of sessions. (Problem: This stage has a specialized topic and few sessions. It is not the Testing Stage, though it’s a natural home for many types of testing sessions. Where do all the other worthy testing submissions go?)

Only the first half of the stage will have prescheduled sessions. The second half will be devoted to “repeat and request”.

Repeat: Since there will be many simultaneous events at the conference, some people will hear about a great Example session after it’s too late to go. Within our room, we’ll have flipcharts upon which people can write “Please repeat session X!” and “Yeah, please!” If there’s enough interest and a willing session organizer, the session will be repeated.

Request: We’ll have quite a few people floating around the conference who can show examples of technique X (not necessarily a testing technique). We’ll have more whiteboards where people can request sessions built around examples of techniques. This is somewhat like Open Space, but oriented toward showing rather than telling. For example, I’d be happy to show my graphical workflow tests in action—if people want to see it.

Take that a step further: I had been thinking of two types of submission: one that comes into the submission system while we passively wait, and one where we actively invite specific people to enter something into the system (or, possibly, simply invite them to come and do their thing). But why should our paying audience wait to see what other people want to push at them? I want our audience to pull by proposing sessions they’d like someone else to perform. Then it’s the producers’ job to find people who can satisfy the demand.

For example, suppose some people think a session on improv would be good, and they make that argument <somewhere>. Then it becomes my task to contact the three or four people I know who’ve done improv and get them to work up a proposal. (In fact, I’m going to do this without prompting.)

Another idea is “Reality Theatre.” As I’ve mentioned before, many groups can’t envision what a good standup or planning meeting is like just from reading descriptions in books. Similarly, the buzz and activity inside a good Agile team is palpable but hard to describe in print or by waving your hands around as you talk. So I would like the Example room to hold the world premieres of short documentaries of actual teams doing actual things. (Real video, edited, with expert commentary.) We may be able to provide some production assistance. Maybe we can get a company to donate prizes.

So that’s what I’m looking for: nothing that’s a safe bet. Watch this space for more.

At the functional testing tools workshop, Ward Cunningham devised a scheme. John Dunham, Adam Geras, Naresh Jain, and I elaborated on it. Before I explain the scheme, I have to reveal the context, which is different kinds of tests with different audiences and affordances:

This is your ordinary xUnit or scripted test. The test is at the top, the program’s in the middle, and the results are at the bottom. In this case, the test has failed, which is why the results are red. The scribbly lines for both test and results indicate that they’re written in a language hateful to a non-technician (like a product owner or end user). But you’d never expect to show such a person failing results, so who cares?

Here’s the same test, passing. The output is just a binary signal (perhaps a green bar in an IDE, perhaps a printed period from an xUnit TextRunner). A product owner shouldn’t care what it looks like. If she trusts you, she’ll believe you when you say all the tests pass. If she doesn’t, why should she believe a little green bar?

Here’s a representation of a Fit test. Both the input and output are in a form pleasing to a non-technician. But, really, having the pleasant-looking results isn’t worth much. It makes for a good demo when showing off the tool, but does a product owner really care to see output that’s the input colored green? It conveys no more information than a green bar and depends on trust in exactly the same way. (James Shore made this point well at the workshop.)

Here’s one of my graphical tests. The input is in a form pleasing to a non-technician. In this case, the test is failing. The output is the same sort of gobbledegook you get from xUnit (because the test is run by xUnit). Again, the non-technician would never see it.

Here’s the same test, passing. My test framework doesn’t make pretty results, because I don’t think they have value worth the trouble.

Here is one of Ward’s “swimlane” tests. The test input (written in PHP) would be unappealing to non-technicians, but they never see it. This test fails, and so it produces nothing a non-technician would want to see.

Here’s the passing test. The output is a graphical representation of how the program just executed. It is both understandable (after some explanation) and explorable (because there are links you can click that take you to other interesting places behind the curtain.)

As when I first encountered wikis, I missed the point of Ward’s newest thing. I didn’t think swimlane tests had anything to do with what I’m interested in: the use of tests and examples as tools for thinking about a piece of what the product owner wants with results that help the programmers. With swimlane tests, the product owner is going to be mostly reactive, saying “these test results are not what I had in mind”.

What I missed is that Ward’s focus wasn’t on driving programming but on making the system more visible to its users. They were written for the Eclipse Foundation. It wants to be maximally transparent, and the swimlane tests are documentation to help the users understand how the Foundation’s process works along with assurance that it really does work as documented.

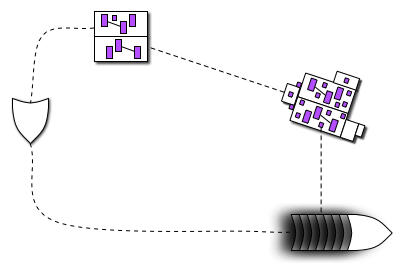

What Ward noticed is that his output might fit together nicely with my input. Like this:

Here, the product owner begins with an executable graphical example of what she wants. The programmers produce code from it. As a user runs that code, she can at any moment get a bundle of pictures that she can point at during a conversation with the producer and other users. That might lead to some new driving examples.

The point of all of this? It’s a socio-technical trick that might entice users to collaborate in application development the way wikis entice readers to collaborate in the creation of, say, an encyclopedia. It’s a way to make it easier for markets to really be conversations. John Dunham dubbed this “crowdware”.

It won’t be quite as smooth as editing a wiki. First, as Naresh pointed out, the users will be pointing at pictures of a tool in use, and they will likely talk about the product at that level of detail. To produce useful driving examples (and software!), talk will have to be shifted to a discussion of activities to be performed and even goals to be accomplished. The history of software design has shown that we humans aren’t fantastically good at that. Adding more unskilled people to the mix just seems like a recipe for disasterware.

Wikis seemed crazy too, though, so what the heck.

Laurent Bossavit and I are working on a site. Once it reaches the minimal features release, I’ll add some crowdware features.

From Keith Braithwaite: a discussion of gauges as a metaphor:

http://peripateticaxiom.blogspot.com/2007_07_01_archive.html

http://peripateticaxiom.blogspot.com/2007/09/gauges.html

It’s nice.

I still like examples as a metaphor, but if it hasn’t caught on in four years and a month, it’s not gonna.

Every word in the example should be about what the example is trying to show.

The intended audience is a human being asking first “How do I…?” and then “What happens when…?”

Possibilities should be grouped so you can see them all at once.

Agile 2008 will be arranged around the metaphor of a music festival. There will be a main stage for the big-draw speakers, the larger tutorials for novices, etc.

I was asked to do a stage about testing that wouldn’t help shunt people into silos. (It shouldn’t be “the testing mini-conference”.) I decided the stage would take seriously the usefulness of explicit, concrete examples—executable or no—in the thinking about, construction, and post-construction investigation of software-ish things. Hence the logo:

Agile Alliance Functional Testing Tools Visioning Workshop

Call for Participation

Dates: October 11 - 12, 2007

Times: 8 AM - 5 PM

Location: Portland, Oregon

Venue: Kennedy School

Description

The primary purpose of this workshop is to discuss cutting-edge advancements in and envision possibilities for the future of automated functional testing tools.

This is a small, peer-driven, invitation-only conference in the tradition of LAWST, AWTA, and the like. The content comes from the participants, and we expect all participants to take an active role. We’re seeking participants who have interest and experience in creating and/or using automated functional testing tools/frameworks on Agile projects.

This workshop is sponsored by the Agile Alliance Functional Testing Tools Program. The mission of this program is to advance the state of the art of automated functional testing tools used by Agile teams to automate customer-facing tests.

There is no cost to participate. Participants will be responsible for their own travel expenses. (However, we do have limited grant money available to be used at the discretion of the organizers to subsidize travel expenses. If you would like to be considered for a travel grant, please include your request, including amount needed, in your Request for Invitation.)
